Abstract

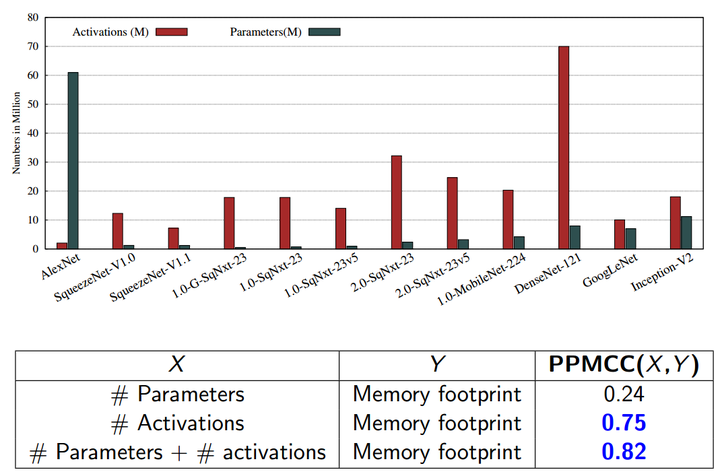

The recent trend in deep neural networks (DNNs) research is to make the networks more compact. The motivation behind designing compact DNNs is to improve energy efficiency since by virtue of having lower memory footprint, compact DNNs have lower number of off-chip accesses which improves energy efficiency. However, we show that making DNNs compact has indirect and subtle implications which are not well-understood. Reducing the number of parameters in DNNs increases the number of activations which, in turn, increases the memory-footprint. We evaluate several recently-proposed compact DNNs on Tesla P100 GPU and show that their “activations to parameters ratio” ranges between 1.4 to 32.8. Further, the " memory-footprint to model size ratio" ranges between 15 to 443. This shows that a higher number of activations causes large memory footprint which increases on-chip/off-chip data movements. Furthermore, these parameter-reducing techniques reduce the arithmetic intensity which increases on-chip/off-chip memory bandwidth requirement. Due to these factors, the energy efficiency of compact DNNs may be significantly reduced which is against the original motivation for designing compact DNNs.